Chapter 8 of 8

Writing

The final major step is writing up the review: turning your protocol, searches, screening decisions, extraction tables, and synthesis plan into clear Methods and Results sections (and, in parallel, the Discussion and any other sections your target venue requires). The manuscript should mirror what you actually did in AIPRA and what you committed to in your registered protocol and evidence synthesis plan.

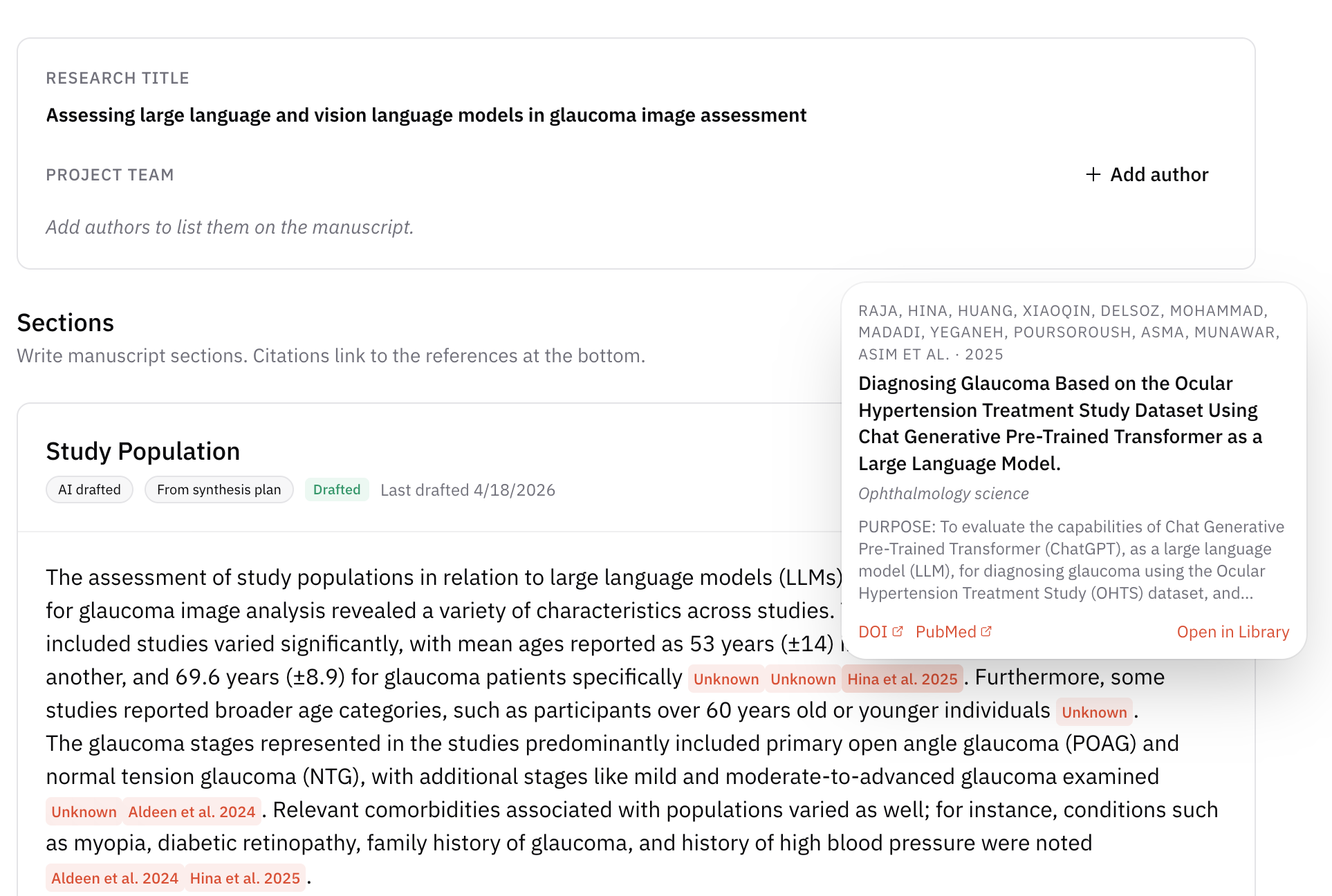

AIPRA can support drafting by generating section text from your project artifacts. When AI-assisted text is offered, the platform emphasizes traceability: claims can be tied back to specific workflow records, extracted fields, or sources so reviewers and readers can verify them—reducing the risk of unsupported statements or hallucinated details.

Writing a systematic review today is as much about transparency as about the numeric results. Expectations for a high-quality review increasingly include a reproducible audit trail: enough detail that another team could understand, and often approximate, your process from the paper and its supplements alone.

Methods — the reproducibility blueprint

A reader with your references and appendices should be able to reconstruct how you found, selected, appraised, and analyzed evidence. The Methods section is that blueprint.

Protocol and registration

State where the review was registered (for example PROSPERO or an equivalent) and cite the record. Describe any material deviations from the published protocol and why they occurred, including changes to eligibility, outcomes, or synthesis that were not prespecified.

Search strategy

Report databases, interfaces, dates, and any grey-literature or supplementary approaches. PRISMA 2020 asks for the full search strategy for every database, register, and website searched, including any filters and limits, in the manuscript or an appendix so others can reproduce or adapt it (Page et al., 2021). Structure search reporting using PRISMA-S (PRISMA-Search) where applicable.
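One way to keep these details together is to log each search as a small structured record and render appendix entries from it. The sketch below is illustrative only: `SearchRecord`, `appendix_entry`, and all field names are hypothetical and are not part of AIPRA, and the query and hit count are made-up placeholders.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class SearchRecord:
    """One database search as it was actually run (hypothetical structure)."""
    database: str   # e.g. "MEDLINE"
    interface: str  # platform used to run the search, e.g. "Ovid"
    run_on: date    # date the search was executed
    query: str      # full search string, exactly as run
    limits: str     # filters applied, e.g. language or date limits
    hits: int       # number of records returned


def appendix_entry(r: SearchRecord) -> str:
    """Render one search as a two-line appendix entry."""
    header = (
        f"{r.database} via {r.interface}, searched {r.run_on.isoformat()}; "
        f"limits: {r.limits or 'none'}; {r.hits} records"
    )
    return f"{header}\n{r.query}"


# Made-up example values, for illustration only.
example = SearchRecord(
    database="MEDLINE",
    interface="Ovid",
    run_on=date(2024, 1, 15),
    query='(exercise OR "physical activity") AND depression',
    limits="English; 2000 onward",
    hits=42,
)
print(appendix_entry(example))
```

Keeping the query string verbatim in the record, rather than retyping it into the manuscript, reduces the chance that the reported strategy drifts from the one that was run.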

Eligibility criteria

Be explicit about inclusion and exclusion rules—for example language restrictions, date limits, study designs (e.g. “RCTs only”), populations, interventions or exposures, comparators, and outcomes—so they map cleanly onto your screening forms and PRISMA counts.

Quality assessment

Name the risk-of-bias or quality tool (e.g. RoB 2 for randomized trials, Newcastle–Ottawa Scale for some observational designs) and describe how many reviewers applied it, any piloting, and how disagreements were resolved.

Results — transparency through flow

Present results in the same order readers experience the review: from records identified to studies included, then characteristics, then synthesized findings—not only as a flat list of effect estimates.

PRISMA flow diagram

A PRISMA flow diagram is expected in most systematic reviews: it should account for the path of every record from initial search through deduplication, screening stages, full-text decisions, and final inclusion—aligned with the numbers you report in text and tables.
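Before finalizing the diagram, it can help to check that the counts add up from one stage to the next. The following is a minimal sketch, assuming a simplified single-source flow; the full PRISMA 2020 template has additional boxes (for example reports not retrieved and records from other sources) that this does not model. `FlowCounts` and `check_flow` are hypothetical names, and the numbers are made up.

```python
from dataclasses import dataclass


@dataclass
class FlowCounts:
    """Counts for a simplified PRISMA flow (field names are illustrative)."""
    identified: int             # records returned by all searches
    duplicates_removed: int     # records removed before screening
    screened: int               # records screened on title/abstract
    excluded_at_screening: int  # records excluded at title/abstract
    fulltext_assessed: int      # reports assessed at full text
    excluded_at_fulltext: int   # reports excluded at full text
    included: int               # studies in the final review


def check_flow(c: FlowCounts) -> list[str]:
    """Return a list of inconsistencies between stages (empty if none)."""
    problems = []
    if c.identified - c.duplicates_removed != c.screened:
        problems.append("identified - duplicates_removed != screened")
    if c.screened - c.excluded_at_screening != c.fulltext_assessed:
        problems.append("screened - excluded_at_screening != fulltext_assessed")
    if c.fulltext_assessed - c.excluded_at_fulltext != c.included:
        problems.append("fulltext_assessed - excluded_at_fulltext != included")
    return problems


# Made-up counts, for illustration only.
counts = FlowCounts(1200, 200, 1000, 900, 100, 80, 20)
print(check_flow(counts))
```

An empty list means each stage's count equals the previous stage minus its exclusions; any message that is returned points at the box where the text, tables, and diagram need to be reconciled.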

Study characteristics

Summarize included studies in a table keyed to your extraction fields. Use the narrative to highlight patterns, heterogeneity, and outliers rather than restating every row.

Synthesis of results

If you conducted a meta-analysis, report models, heterogeneity, and sensitivity analyses consistent with your analysis plan. If pooling was not appropriate, follow SWiM (Synthesis Without Meta-analysis) so narrative synthesis remains structured and auditable (see also the Evidence synthesis chapter).
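For readers unsure what "report heterogeneity" involves numerically, the sketch below computes three commonly reported statistics from per-study effect estimates and their variances: Cochran's Q, I², and the DerSimonian-Laird estimate of between-study variance (τ²). It is an illustration under stated assumptions, not the method AIPRA uses; the function name and the input values are hypothetical, and a real analysis would normally rely on an established meta-analysis package.

```python
def heterogeneity(effects, variances):
    """Cochran's Q, I^2 (percent), and DerSimonian-Laird tau^2.

    effects:   per-study effect estimates (e.g. log odds ratios)
    variances: per-study sampling variances, same order as effects
    """
    weights = [1.0 / v for v in variances]  # inverse-variance weights
    sum_w = sum(weights)
    # Fixed-effect (inverse-variance) pooled estimate.
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum_w
    # Q: weighted squared deviations from the pooled estimate.
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I^2: share of total variation attributed to heterogeneity, floored at 0.
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    # DerSimonian-Laird moment estimator of tau^2, floored at 0.
    c = sum_w - sum(w * w for w in weights) / sum_w
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0
    return {"pooled_fixed": pooled, "Q": q, "df": df, "I2": i2, "tau2": tau2}


# Made-up effect sizes and variances, for illustration only.
stats = heterogeneity([0.1, 0.3, 0.5], [0.01, 0.01, 0.01])
print(stats)
```

Reporting Q with its degrees of freedom alongside I² and τ² lets readers judge both whether heterogeneity is present and how large it is on the scale of the effect measure.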

Manuscript support in AIPRA

AIPRA’s manuscript workflow is designed to combine collaborative writing with links back to the underlying project data, so drafts stay aligned with extraction outputs and the rest of the review trail.